Day 2! We started bright and early with the first paper session at 8:30. Katherine, who I had met on Monday, was to kick-off the day in paper session 4.

Paper session #4 - Undergraduate Research Experiences

Katherine Izhikevich - Exploring Group Dynamics in a Group-Structured Computing Undergraduate Research Experience

You can find the work of Katherine and her colleagues here.

The title of the work is relatively self-explanatory, the idea was to find out how students who worked on research projects in groups, experienced their group dynamics. These were second year students, mentored in groups of 4. The experiences of the students were analyzed through a questionnaire with open questions and Likert scale questions, taken at two points during the course. 106 students filled in at least one questionnaire.

From the quantitative analysis, they found that group experiences were overwhelmingly positive. The qualitative analysis found that these experiences centered around three themes: group fit and belonging, emotional and academic support, and logistics. This last one includes division of labor, scheduling of meetings and communication.

In conclusion, group-based undergraduate research experiences seem feasible and have a positive impact.

Atira Nair and Dustin Palea - "It's usually not worth the effort unless you get really lucky": Barriers to Undergraduate Research Experiences from the Perspective of Computing Faculty

The second paper by Atira, Dustin and their colleagues can be found here.

The previous paper focused on the students' perspective on undergraduate research experiences, this paper is exploring the faculty perspective. The quote in the paper title was a good introduction, one that made several people in the room laugh and agree. It tells us much of the story that is held within!

So, to summarize the contribution: research experiences are valuable for the students' development, as well as for student retention. However, what do faculty think of it if they can just consider their own workload and efforts? To explore this, the authors ran an interview with 12 faculty, who each supported at least 1-5 students per period. They identified three misalignment during these experiences, between:

- Educational systems and research systems

- Students focus mostly on classes, but these classes do not teach the nature of research.

- Faculty have too much work already running their teaching load, not leaving time for running research experiences.

- There are no pipelines for research experiences: if the faculty does not teach undergrad, they have no way of reaching them for research experiences.

- Goals of undergrads and goals of faculty

- Undergrads want to do research experiences to fill their CV, whereas faculty would like the undergrads to contribute to research advancements

- Undergrads may want to explore research interests, but faculty may want to recruit for existing skill.

- Undergrads expectations of research and the realities of research

- Undergrads need supervision and structure, whereas research is unstructured and cannot always be planned.

- Faculty are not trained for mentoring to the extent that undergrads need this.

- Undergrads want to learn interesting skills, but much of research contains tedious tasks that are not so fun.

This last point specifically reminded me of the paper “Everyone wants to do the model work, not the data work”: Data Cascades in High-Stakes AI by Nithya Sambasivan et al. We have used this paper in our Knowledge Engineering course, to show our Data Science students that, although the model is important, the data is maybe even more important. And focusing on the data is essential in order to create systems that work correctly. Data work is perhaps more boring, according to some, but it is the absolute core to any conclusion being drawn from the data.

Lightning talks and posters

Then it was time for the final lightning talk and poster sessions, this time by the attendees of the doctoral symposium. There were two that specifically caught my attention.

The first was by Mohammed Hassan of University of Illinois, with work titled How do we Help Students "See the Forest from the Trees?". His proposal is to work on exploring the mental characteristics that come into play when learning to read code. He states that we are aware of several theories and techniques for reading and interpreting code, such as transitive inference, conservation and reversibility, but we do not know how to teach these things to students.

Mohammad's previous work has been very interesting as well, with a paper on reverse-tracing at ICER21 and a paper on debugging code at SIGCSE22. Unfortunately I was not able to speak with Muhammad at the poster, as it was incredibly busy and I joined the line too late. I should probably reach out to Mohammed and Craig to see what they think of applying these techniques to SQL!

The second talk and poster of interest was by Sanaa Algaraibeh of University of Idaho, titled Techniques for Enhancing Compiler Error Messages. She has analyzed learners' experiences with error messages, and has proposed a technique to parse source code in three phases (functions, function bodies, and line-by-line), such that the compiler can generate better syntax error messages. The example error message looks very nice, and made me curious to see more. Their next step is to evaluate this technique in the classroom. I was also not able to talk to Sanaa, and I should reach out to her too, given our current work on SQL parsing and error messages.

Paper session #5 - Groups and Teams

Our next paper session, just before lunch, was on how groups and teams (don't) work in the context of computer science courses.

Lauren Margulieux - Getting By With Help from My Friends: Group Study in Introductory Programming Understood as Socially Shared Regulation

This paper by Lauren and her colleagues can be found here.

The work focused on evaluating whether current models of self-regulation and co-regulation match students' expression of their learning strategies, and how these learning strategies relate to student performance. To do this, they used a repeated questionnaire (midpoint and end of the course) containing 8 questions, containing 3 quantitative and 1 qualitative question for both self-regulation and co-regulation.

From the qualitative questions, they identified a couple of themes that describe students experiences regarding co-regulation:

- Social help-seeking: asking a fellow student for hints on how to solve a problem.

- Group learning: bouncing ideas off eachother, and explaining how you solved a problem (and hearing from others) to learn more deeply.

- Socially shared regulation: ensuring no one is left behind, keeping each other on track.

- Learning through teaching: explaining concepts to others makes you learn in more depth.

This work reminds me of many things I have learnt during teacher training courses. They key idea is that active learning elements, where students communicate and reflect, may help them learn better. This seems to be represented from the students perspective as well in this paper.

Kai Presler-Marshall - What Makes Team[s] Work? A Study of Team Characteristics in Software Engineering Projects

This work by Kai and his colleagues can be found here.

The work is similar in nature to the work by Lauren and her colleagues, with as major differences that Kai and colleagues used interviews over questionnaires, and that Kai and colleagues analyzed a group project, instead of individual assignments worked on in a group. So, although both were examining how students worked in groups, the focus was different due to the different nature of the projects.

The idea behind this study is to analyze how students ran their teams, what their problems were, and how they were able to overcome these (or not).

They found there were three main challenges faced by their student teams: communication difficulties, time management and task planning. Teams were able to (partially) overcome such challenges through the application of self-reflection, such as identifying the need for more communication, or through external pressure such as deadlines and grade reduction. Successful teams were those who communicated, collaborated and held themselves accountable.

It seems that one of the most important characteristics of well-functioning teams is reflection. This is extra important in case of challenges. As such, Kai suggested that group projects should use tooling that can help teams track their issues and communication. This can help them give insights, and as such requires less reflection (which we know can be difficult for undergrads!)

Paper session #6 - Notional machines

After another plate of lovely Italian pasta for lunch, it was time for more paper presentations! The next session contained two papers again, both on notional machines.

Gayithri Jayathirtha - "How does the computer carry out DigitalRead()?" Notional Machines Mediated Learner Conceptual Agency within an Introductory High School Electronic Textiles Unit

You can find this work by Gayithri here.The title of this paper is a bit of a mouthful, but the core of it is that Gayithri examined notional machines that were used by highschool teachers in a course on electronic textiles. How do teachers apply these notional machines within the classroom, to help students learn?

To identify notional machines and their impact on learning, Gayithri observed 37 class periods at a public charter school. (One of the attendees helpfully shared the wikipedia article on Charter Schools for more information) Of the 37 class periods, she analyzed 8 periods that were heavy on discussion of programming concepts.

She found that students actively engage with the teacher's notional machine, questioning them, adopting them, and giving suggestions for similar mechanisms. Notional machines seem to have a mediating role in the classroom, and they help the class to make sense of the concepts together.

There was lots of discussion at the tables on whether these metaphors should be called notional machines, or that a notional machine is the model of the 'whole', and not just the parts. Via discord, we were presented with alternative terms from other areas of study as well, such as didactic reduction and boundary objects. Nevertheless, I found this methodology inspiring. Notional machines are still a very vague concept, but by identifying the metaphors used in the classroom, we can get closer to a concept representation that is accurate. I'd love to run a study like this for SQL commands, to see how various teachers explain these concepts!

John Clements - Towards a Notional Machine for Runtime Stacks and Scope: When Stacks Don't Stack Up

You can find the work by John and Shriram Krishnamurthi here.In the paper, they examine to what extent students in upper-level courses understand the concept of runtime stacks. To this end, they asked students to draw representations of pieces of code at some point during execution. They find that students have a very fragile understanding of stacks, and the knowledge they do have often does not generalize. One example of a problem they identified is in the characteristics of a stack: it can push and pop. However, in their explanations, the students never used pop!

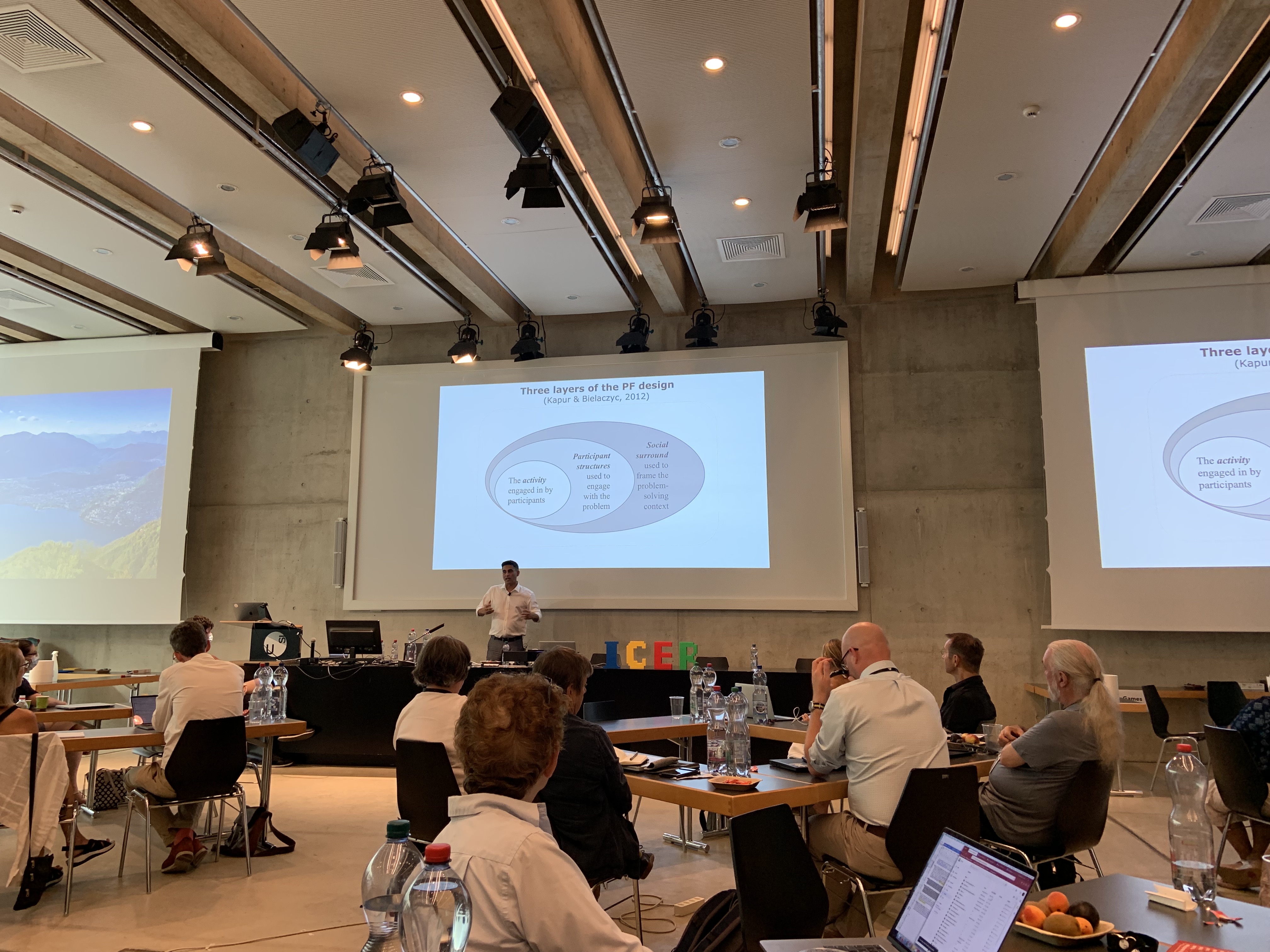

Manu presents the key aspects of productive failure at ICER.

Manu presents the key aspects of productive failure at ICER.

Keynote: Manu Kapur - Productive Failure

After another coffee break, it was time for one of the big events of the conference: the Keynote! It was planned in the middle of the conference, such that it was available to most of our virtual attendees as well.

The keynote was on Manu's key research direction, which is productive failure. To introduce the topic, he gave us a nice example. Suppose we have two groups of kids, in two different rooms. We're introducing a new toy to them, to play with. For the one group, we demonstrate how to play with the toy, for the other we will not. Which of these two groups will be more creative?

Of course, telling kids how to play with a toy boxes them in. They will rarely go beyond what you have shown them. However, this is exactly how we teach our students! This fails to develop creativity and flexibility in our student populations.

So, what is the alternative? We can design for students to fail through the concept of Productive Failure (PF). The opposite of this is our typical way of instructing: Direct Instruction (DI). Research has shown that Productive Failure is better than Direct Instruction for transfer and conceptual knowledge. If it is done correctly, it can be three times as effective as normal teaching. This can be reached with even a small intervention: a recent study of a 7 hour intervention (out of 200 hours in the course), improved passing rates significantly (a paper on this is on its way!).

However, you cannot randomly give students a task and let them fail. PF is about more than a difficult task. If a task is not engaging, students will not want to try it. The four keywords Manu used were activation, awareness, affect and assembly.

An interesting question from the audience, that I was also wondering about, is how to scale Productive Failure to more complex topics? Manu referred to the meta-analysis they had undertaken, where they found that PF does not work well for domain-general skills. What does this mean? Wikipedia tells me that domain-general skills involve memory development (association, generalization, recognition and recall), executive functions (working memory) and language (attention, perception, remembering). I'm not sure what to conclude from this.

On the other hand, the work by Phil Steinhorst, on Investigating Productive Failure in Computer Science, part of the Doctoral Consortium, did show the application of PF in more advanced courses. I talked to him a little bit at his poster on Monday, and they introduced single PF exercises in courses such as Operating Systems, and Pattern Recognition. Neither the poster, nor the abstract, had much information on what these exercises looked like, but perhaps I should dive a bit deeper in Phil's work to see if this could be applied for SQL as well. The biggest question I have is, (how) can you apply PF for language-related questions with a specific syntax?

Paper Session #7 - Teachers

The final session of the day was on Teachers and their backgrounds and emotions surrounding teaching.

Xiaohua Jia - Teaching Quality in Programming Education:: the Effect of Teachers’ Background Characteristics and Self-efficacy

You can find the work by Xiaohua and Felienne here.

In China, since a few years, programming languages and AI have been adopted as a core curriculum activity in primary and secondary school. There are no dedicated CS teachers, so STEM teachers are responsible for teaching these subjects. As they are not specifically educated for this job, it is interesting to examine what these teachers' background is, and which teaching practices they apply.

They found that close to forty percent of their participants had majored in CS, and 23 percent had prior experience with Scratch as language of instruction. But none of these had a statistically significant effect on teaching practices.

With regard to which teaching practices they applied, most practices were applied often or always by more than 50% of participants. The only practice that ran below 50% was I let students practice similar tasks until I know that every student has understood the subject matter. The most often applied practice was I set goals at the beginning of instruction.

Amy Ko - “I would be afraid to be a bad CS teacher”: Factors Influencing Participation in Pre-Service Secondary CS Teacher Education

This work by Jayne and Amy can be found here.

The question Jayne and Amy set out to answer is, what makes teachers decide to (not) become CS teachers? To this end they interviewed candidates in their Masters in Teaching program. Jayne herself was also in this program, which helped with recruiting and rapport, leading to high-quality data.

Ten candidates were interviewed, of whom five were interested to become a CS teacher, and the other five were not. In the interviews, they identified four groups of factors that influenced these decisions:

- Cost. The pre-service program was a funded opportunity, which was a plus for some. However, funding was limited, so candidates that were not fully committed were happy to leave the funding to their classmates. Another factor in this group is the job security: CS was seen as a field they could easily get a job in.

- Justice. A major factor here was to right historical wrongs: making CS available to kids, providing positive experiences with it. Also, CS skills can be seen as a form of literacy in this age of computers, something that everyone should have.

- Knowledge and belonging. One factor here was a prior interest in CS. On the other hand, there were fears such as lack of respect and self-efficacy, or the lack of a trusted mentor.

- Entering the profession. Students mentioned they were tired or burnt out from their studies during Covid, and as such did not want to take the pre-service program. They were also scared of the workload that comes with teaching too many courses (for example if they would teach both math and CS), or the lack of agency for what to teach. Finally, a very interesting factor to me was a worry about their limited capacity to care. If we relate back to the Justice factor, these students want to do the right thing. However, they feel that they might not be able to do this to the extent they would like!

An interesting question from the audience was regarding burnout. In the US, apparently there is typically only one (part-time) CS teacher per school. Being the only teacher likely also has an effect on burnout. How do they support for this? Amy mentioned the Computer Science Teachers Association (CSTA) that they try to interest the students for. Plus, the aim is to embed the students in their cohort, such that they can rely on one another.

The banquet venue at the top of Monte Bré. Photo credit to the ICER twitter account.

The banquet venue at the top of Monte Bré. Photo credit to the ICER twitter account.

Banquet at Monte Bré

After such an interesting close of the day, it was time for a little break, after which we had the banquet. I managed to quickly drop off my stuff at the hotel before we went up the mountain. It was incredibly hot, especially in the funicolare, but we were rewarded with nice food and a great view!

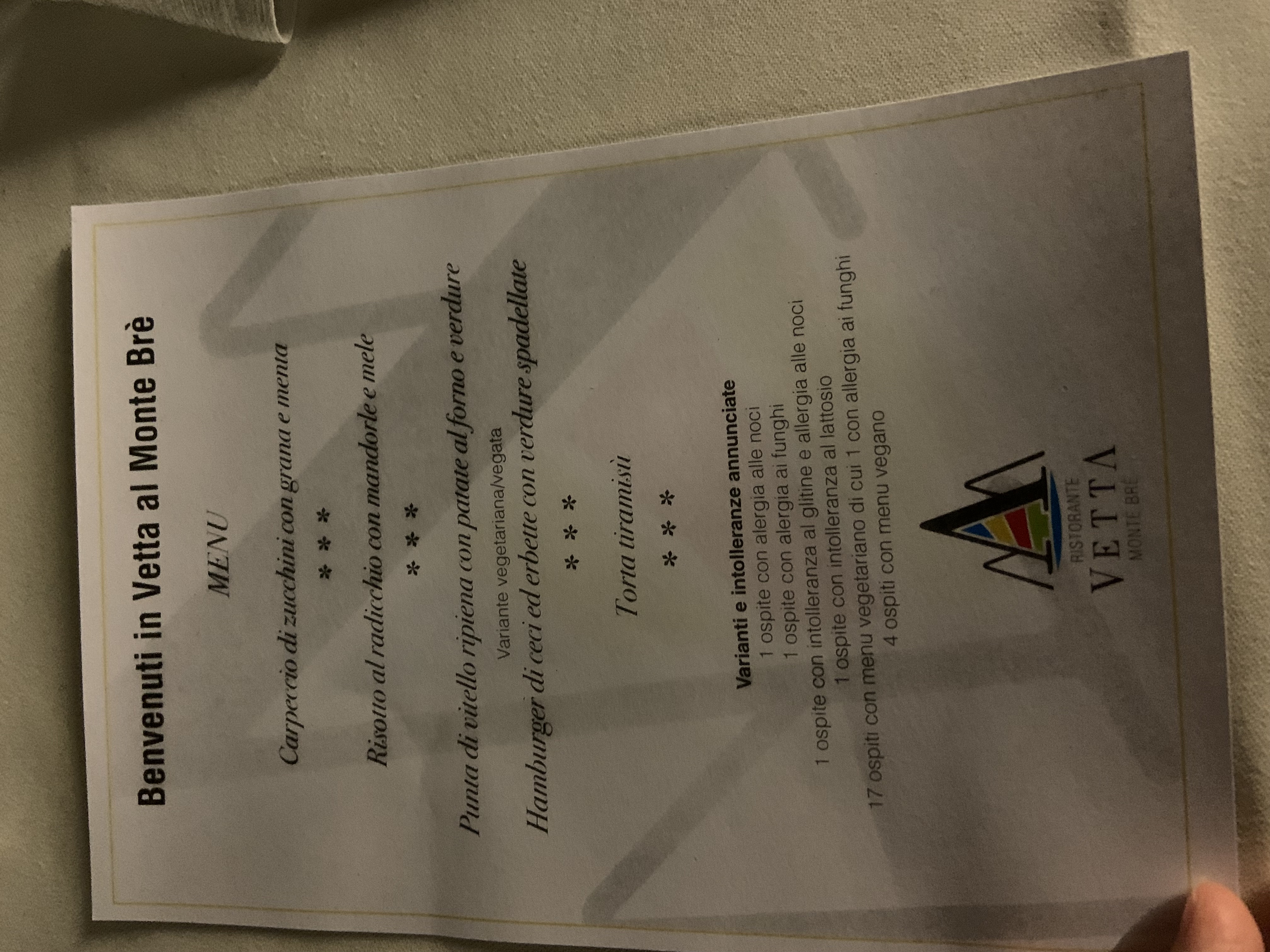

The lovely banquet menu.

The lovely banquet menu.

The sights at night were wonderful! Photo credit to Kai Presler-Marshall.

The sights at night were wonderful! Photo credit to Kai Presler-Marshall.